I’ve written a few posts on how to speed up web sites by sending the correct headers to leverage browser caching and compressing .php, .css and .js files without mod_gzip or mod_deflate.

The intended audience for this post is developers who have already applied most or all of Google’s Web Performance Best Practices and Yahoo’s Best Practices for Speeding Up Your Web Site and are wondering how to speed up WordPress sites in particular.

I assume you’re familiar with WordPress caching and are already using a caching plugin, such as WP Super Cache, W3 Total Cache or the like.

Reduce HTTP requests

Reducing HTTP requests should be the very first step in speeding up any site. If you are using plugins, watch them carefully for inefficiencies such as extra CSS and JavaScript files. Combine, minify and compress these files. Some plugins let you turn off their bundled CSS on the plugin’s settings page. Where possible, copy the plugin’s CSS into the current theme’s style.css and turn off the extra file.
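When a plugin has no setting for its stylesheet, the file can usually be dequeued from the theme instead. Here is a minimal sketch for functions.php, assuming the plugin registered its stylesheet under the handle `example-plugin-style`. That handle is hypothetical; the real one is in the plugin's source, or in the `id` attribute of its `<link>` tag with the `-css` suffix removed. It also assumes the plugin's rules have already been copied into style.css.

```php
// Stop a plugin's stylesheet from being printed on the front end.
// 'example-plugin-style' is a hypothetical handle; replace it with the
// handle the plugin actually uses.
function theme_dequeue_plugin_css() {
    wp_dequeue_style( 'example-plugin-style' );
}
// Priority 100 so this runs after the plugin has enqueued its stylesheet.
add_action( 'wp_enqueue_scripts', 'theme_dequeue_plugin_css', 100 );
```

Reload a page and view the source to confirm that the extra `<link>` tag is gone and the page still looks right.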

Delete deactivated plugins

Remove any plugins you’re not using. Deactivated plugins can be deleted from the Plugins page.

Speed up the mod_rewrite code

jdMorgan from WebmasterWorld.com has written a replacement for the .htaccess rewrite rule used by WordPress. This will speed up the WordPress mod_rewrite code by a factor of more than two.

This is a total replacement for the code supplied with WP as bounded by the “Begin WP” and “End WP” comments, and fixes several performance-affecting problems. Notably, the unnecessary and potentially-problematic <IfModule> container is completely removed, and code is added and re-structured to both prevent completely-unnecessary file- and directory- exists checks and to reduce the number of necessary -exists checks to one-half the original count (due to the way mod_rewrite behaves recursively in .htaccess context).

http://www.webmasterworld.com/apache/4053973.htm

The following code is adapted from the original to add png, flv and swf to the list of static file formats:

# BEGIN WordPress
# adapted from http://www.webmasterworld.com/apache/4053973.htm
#
RewriteEngine on
#
# Unless you have set a different RewriteBase preceding this point,
# you may delete or comment-out the following RewriteBase directive
# RewriteBase /
#
# if this request is for "/" or has already been rewritten to WP
RewriteCond $1 ^(index\.php)?$ [OR]
# or if request is for a static file (image, css, js or flash)
RewriteCond $1 \.(gif|jpg|png|ico|css|js|flv|swf)$ [NC,OR]
# or if URL resolves to existing file
RewriteCond %{REQUEST_FILENAME} -f [OR]
# or if URL resolves to existing directory
RewriteCond %{REQUEST_FILENAME} -d
# then skip the rewrite to WP
RewriteRule ^(.*)$ - [S=1]
# else rewrite the request to WP
RewriteRule . /index.php [L]
#
# END WordPress

Only load the comment-reply.js when needed

In the default WordPress template, the comment-reply.js script is included on all single post pages, regardless of whether nested/threaded comments are enabled. A simple tweak changes the theme to include comment-reply.js on single post pages only when it makes sense to do so: if threaded comments are enabled, commenting on that post is allowed, and a comment already exists.

Remove the following line from your theme’s header.php:

<?php if ( is_singular() ) wp_enqueue_script( 'comment-reply' ); ?>

Add the following lines to your theme’s functions.php.

// Don't add the wp-includes/js/comment-reply.js?ver=20090102 script to single post pages unless threaded comments are enabled
// adapted from http://bigredtin.com/behind-the-websites/including-wordpress-comment-reply-js/
function theme_queue_js(){
    if (!is_admin()){
        // have_comments() is only reliable after comments_template() has run,
        // so check the post's stored comment count instead
        if (is_singular() && (get_option('thread_comments') == 1) && comments_open() && get_comments_number() > 0){
            wp_enqueue_script('comment-reply');
        }
    }
}
add_action('wp_print_scripts', 'theme_queue_js');

Only load the l10n.js when needed

In WordPress 3.1, an l10n.js script is loaded. It is “mostly used for scripts that send over localization data from PHP to the JS side using wp_localize_script().” Whether it’s safe to remove this file seems to be a matter of debate, but should you decide you want to remove it…

Add the following lines to your theme’s functions.php.

// Don't add the wp-includes/js/l10n.js?ver=20101110 script to non-admin pages
// adapted from http://wordpress.stackexchange.com/questions/5451/what-does-l10n-js-do-in-wordpress-3-1-and-how-do-i-remove-it
function remove_l10n_js(){
    if (!is_admin()){
        wp_deregister_script('l10n');
    }
}
add_action('wp_print_scripts', 'remove_l10n_js');

Replace unnecessary code executions and database queries

WordPress saves settings specific to your blog in the database. These settings include what language your blog is written in, whether text is read left-to-right or vice versa, the path to the template directory, etc.

The default WordPress theme contains a number of database queries in order to figure out these things and build the correct page. The default theme needs this flexibility, but your theme does not. Joost de Valk recommends replacing these database queries with static text in your theme files in his post Speed up WordPress, and clean it up too!

An easy way to do this is to browse to your web site and then view the source code. Copy the content that won’t change from page to page and paste it into your theme, overwriting the PHP with the generated HTML.

For example, my theme’s header.php file contains this line:

<html xmlns="http://www.w3.org/1999/xhtml" <?php language_attributes(); ?>>

The source code of the rendered page displays this line:

<html xmlns="http://www.w3.org/1999/xhtml" dir="ltr" lang="en-US">

On my blog, this HTML output is never going to be anything different, so why make WordPress look these settings up in the database each time a page is loaded? This line is an excellent candidate for replacement. The footer.php file contains a handful more opportunities for replacement, but go through each of your theme’s files and look for references to the template directory and other data that won’t change as long as you’re using the theme. All told, I was able to replace 12 database queries with static HTML.

Joost also recommends checking for unnecessary or slow database queries, and installing a helpful debugging plugin, in his post on Optimizing WordPress database performance.
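Before hunting for queries to replace, it helps to know how many a page actually runs. Here is a minimal sketch for functions.php using WordPress's `get_num_queries()` and `timer_stop()`. The function name `theme_print_query_stats` is only illustrative, and the sketch assumes your theme calls `wp_footer()` in footer.php. The figures are printed inside an HTML comment so visitors don't see them.

```php
// Print the number of database queries and the page generation time
// as an HTML comment near the bottom of every front-end page.
function theme_print_query_stats() {
    printf(
        "<!-- %d queries in %s seconds -->\n",
        get_num_queries(),
        timer_stop( 0, 3 ) // 0 = return the value instead of echoing it
    );
}
add_action( 'wp_footer', 'theme_print_query_stats' );
```

Check a page that your caching plugin has not served from cache, note the count, then compare it again after replacing template tags with static HTML.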

Clean out your MySQL database

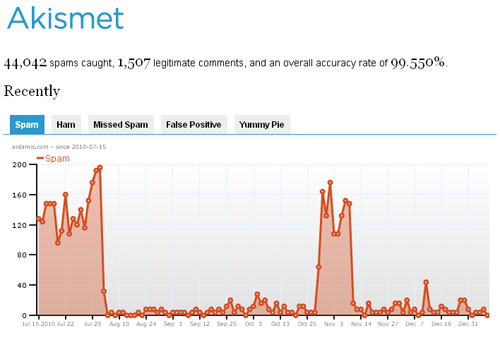

Delete spam comments

From the Dashboard, click Comments, then click on Empty Spam.

Delete post revisions

If you don’t use post revisions, you may want to delete them from the wp_posts table. Back up your database, then run the following SQL query:

DELETE FROM wp_posts WHERE post_type = 'revision';

Before: 683 records

After: 165 records

This does not delete the latest draft of unpublished posts. It’s a good idea to optimize the table after deleting the revisions.

You can stop WordPress from saving post revisions by adding the following code to wp-config.php:

define('WP_POST_REVISIONS', false );
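If you would rather keep a short history than none at all, `WP_POST_REVISIONS` also accepts a number. Here is a sketch for wp-config.php, to be used in place of the line above and placed before the line that loads wp-settings.php. The value 3 is an arbitrary example.

```php
// Keep at most 3 revisions per post (3 is an arbitrary example value).
define( 'WP_POST_REVISIONS', 3 );
```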

Optimize your MySQL database

Optimizing your MyISAM tables is comparable to defragmenting your hard drive. It’s probably been a while since you’ve done that, too.

If you’re using phpMyAdmin, browse to your WordPress database. Under the Structure tab, at the bottom of the list of tables, click on the link “Check all”. In the “With selected” menu, choose “Optimize table”. (I would have recommended just optimizing tables that have overhead, but the wp_posts table can be optimized even when it doesn’t show any overhead.)

For MyISAM tables, OPTIMIZE TABLE works as follows:

- If the table has deleted or split rows, repair the table.

- If the index pages are not sorted, sort them.

- If the table’s statistics are not up to date (and the repair could not be accomplished by sorting the index), update them.

http://dev.mysql.com/doc/refman/5.1/en/optimize-table.html

Flush the buffer early

When users request a page, it can take anywhere from 200 to 500ms for the backend server to stitch together the HTML page. During this time, the browser is idle as it waits for the data to arrive. In PHP you have the function flush(). It allows you to send your partially ready HTML response to the browser so that the browser can start fetching components while your backend is busy with the rest of the HTML page. The benefit is mainly seen on busy backends or light frontends.

http://developer.yahoo.com/performance/rules.html#flush

Add flush() between the closing </head> tag and the opening <body> tag in your theme’s header.php:

</head>
<?php flush(); // http://developer.yahoo.com/performance/rules.html#flush ?>
<body>

OK, so this isn’t technically a WordPress-specific tweak, but it’s a good idea.
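If PHP output buffering is active, `flush()` on its own may not send anything, because the markup is still sitting in PHP's own buffer. Here is a minimal sketch of a helper for functions.php that pushes that buffer out first. The name `theme_flush_early` is hypothetical, and the sketch assumes nothing on your site needs to capture the whole page in an output buffer for the request.

```php
// Send whatever has been generated so far to the browser.
// If an output buffer is active, its contents must be flushed first;
// flush() then asks PHP and the web server to send the data on.
function theme_flush_early() {
    if ( ob_get_level() > 0 ) {
        ob_flush();
    }
    flush();
}
```

To try it, call `<?php theme_flush_early(); ?>` in header.php in place of the bare `flush()`. Caching plugins that buffer the page and server-side compression can both hold the output back or interfere with a partial flush, so test this with your own setup before relying on it.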